On 20 July 1969, about five minutes into the Lunar Module's powered descent to the Sea of Tranquility, a caution tone chimed in the cabin and the amber display in front of Neil Armstrong lit up with a four-character alarm code. 1202.

"Program alarm," Armstrong said. Four seconds later, in the same flat tone: "It's a 1202."

In Houston, a 26-year-old guidance officer named Steve Bales had to decide, inside the next few seconds, whether to abort. He had not seen the alarm in a real mission. About two weeks earlier, in a simulation sprung without warning by the simulation supervisors, a similar code had been thrown at him and he had called for an abort when he should not have. The flight director, afterwards, had asked quietly for every alarm code to be on a single sheet of paper before launch, with GO or NO GO beside each one.

A 24-year-old support engineer named Jack Garman had spent that weekend writing the list. When 1202 flashed on Eagle's display and Bales called through on the voice loop, Garman's eye was already on the row. He said go, as long as the alarm did not keep recurring. It did recur. Three more times. Plus one 1201. Across roughly four and a half minutes of powered descent the spacecraft kept flying, the computer kept shedding the tasks it could afford to shed, and a sensor probe beneath Eagle's landing pad finally touched lunar dust.

I am a retired specialist anaesthetist. For thirteen years I sat at the head of operating tables in New South Wales. I have since spent my time writing software and reading the history of the machines that were built to keep people alive. I know of no piece of engineering history that explains what a good anaesthetist actually does when things go wrong better than what the Apollo Guidance Computer did that afternoon.

What a 1202 actually was

The Apollo Guidance Computer had to run dozens of different computations at different rates on the same small machine. Attitude control at a hundred cycles a second. Navigation at two. Display at five. Radar, whenever the radar felt like producing data. A single-threaded loop was the obvious way to run all of that, and it was also the wrong way, because one long computation would lock out every other task, and a blocking computation during a lunar landing would leave the vehicle uncontrolled.

The solution, designed at MIT in the mid-1960s by a man named Hal Laning, was priority scheduling. Every task in the system had a fixed priority. When one task finished, or when a hardware interrupt arrived, the scheduler looked at the queue of pending work and picked the highest-priority item to run next. If the queue ever filled past the working memory available to service it, the scheduler did not stall. It dropped the lowest-priority waiting tasks, wrote a program alarm to the display, restarted the critical guidance and control loops out of a protected area of memory, and kept running.
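For readers who think in code, the behaviour described above can be sketched in a few lines of Python. This is a toy, not a reconstruction of the AGC's software: the class name, the job names, the four-slot capacity, and the priority numbers are all invented for illustration, and it simplifies "drop the lowest-priority waiting tasks" to "drop everything that is not on the protected restart list".

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Job:
    sort_key: int                     # negated priority, so heapq pops the highest first
    name: str = field(compare=False)


class Executive:
    """Toy priority scheduler with load shedding (illustrative only)."""

    def __init__(self, capacity, restart_table):
        self.capacity = capacity            # hypothetical number of job slots
        self.restart_table = restart_table  # "protected" list of (name, priority)
        self.queue = []
        self.alarms = []

    def schedule(self, name, priority):
        """Queue a job. On overflow, shed load and report it instead of stalling."""
        if len(self.queue) >= self.capacity:
            self._shed_and_restart()
            return False                    # the request that overflowed is shed too
        heapq.heappush(self.queue, Job(-priority, name))
        return True

    def _shed_and_restart(self):
        self.alarms.append(1202)            # tell the humans, then carry on
        # Discard all waiting work and requeue only the protected jobs.
        self.queue = [Job(-prio, name) for name, prio in self.restart_table]
        heapq.heapify(self.queue)

    def run_next(self):
        """Return the name of the highest-priority waiting job, or None."""
        return heapq.heappop(self.queue).name if self.queue else None


# Hypothetical workload: two critical loops plus low-priority chores.
RESTART_TABLE = [("guidance", 30), ("attitude_control", 40)]
ex = Executive(capacity=4, restart_table=RESTART_TABLE)
for job_name, job_priority in RESTART_TABLE:
    ex.schedule(job_name, job_priority)
ex.schedule("display_update", 10)
ex.schedule("radar_read", 5)
accepted = ex.schedule("radar_read", 5)     # fifth request: the queue is already full

print(ex.alarms)                            # [1202]
order = [ex.run_next(), ex.run_next()]
print(order)                                # ['attitude_control', 'guidance']
```

The design choice worth noticing is in `schedule`: the overflow path has no exception and no wait. The scheduler treats overload as an expected state with a prepared response, which is the whole point of the passage above.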

Laning had written that behaviour years before it mattered, for situations he could not then specifically anticipate. On the afternoon of 20 July 1969, a rendezvous radar switch had been left in the wrong position on the Lunar Module's panel. It was generating about twelve thousand phantom interrupts every second, consuming roughly fifteen per cent of the computer's throughput on top of a descent load that was already near the machine's limit. Without the priority scheduler, the landing would have failed. With it, the computer shed its low-priority work, told the humans it was under stress, and kept the guidance loop locked onto the landing site.

But 1202 was not an abort. It was a status report. The machine was telling the crew: I am under load, I am shedding the parts I can afford to shed, I am still flying the most important thing.
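The radar figures in this section can be sanity-checked with a line of arithmetic. The sketch below assumes that each phantom pulse cost the computer one memory cycle of about 11.72 microseconds, and it uses a purely hypothetical baseline of 90 per cent for "already near the machine's limit"; the text gives no figure for the descent load, so treat that number as a placeholder.

```python
# Back-of-envelope load budget. The pulse rate is the rounded figure from the
# text; the cost per pulse is an assumption (one memory cycle stolen each).
MEMORY_CYCLE_S = 11.72e-6      # assumed cost of one phantom pulse, in seconds
PULSES_PER_S = 12_000          # "about twelve thousand" per second

stolen_fraction = PULSES_PER_S * MEMORY_CYCLE_S
print(f"{stolen_fraction:.1%}")            # 14.1%

# Hypothetical baseline load during descent (not a figure from the text).
BASELINE_LOAD = 0.90
overloaded = BASELINE_LOAD + stolen_fraction > 1.0
print(overloaded)                          # True
```

Under those assumptions the stolen time comes out at about fourteen per cent, in line with the "roughly fifteen per cent" quoted above, and it is enough to push a nearly full machine past its limit.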

What I did at the head of the table

Take a routine anaesthetic. A 58-year-old woman, knee replacement, low risk. She is asleep, breathing through a tube, monitored for the usual half-dozen physiological signals. The surgeon is making small talk. I am writing in the chart, titrating a low-dose infusion, replying to an email from theatre coordination, and half-listening to the radio.

Her blood pressure falls. Not dramatically. Twenty points.

The first thing I drop is the chart entry. The second is the conversation with the surgeon. The third is the email. I give a small dose of vasopressor, watch the response, read the end-tidal carbon dioxide trace, look at the surgical field, ask the scrub nurse to recycle the blood pressure cuff.

Her pressure falls further. Her heart rate climbs. Her oxygen saturation begins to drift.

Now I drop the non-critical infusion titration. I drop the small talk entirely. I drop the half of my attention that has been monitoring the rest of the theatre. What is left is the airway, the breathing, the circulation, and the set of hypotheses competing for my attention about what is making those three worse. At the peak of the crisis, I am running two or three tasks. None of them involves anyone else in the room.

From outside, this looks like inactivity. The surgeon asks a question and I do not answer. A nurse asks a question and I answer in two words without looking up. A student anaesthetist once told me, after a case that had gone badly and recovered, that she had thought for a moment I had frozen. I had not. I had shed load.

What the room sees is not what the anaesthetist is doing

Most people in an operating theatre have never seen a real anaesthetic emergency. Most anaesthetists see one or two a year. When it happens, what looks to the room like someone who has stopped paying attention is a priority-scheduled executive running inside a 45-year-old human brain. The scheduler is not conscious. You cannot run it while also entertaining the registrar's weekend plans. You cannot run it while also being polite.

The alarms on my machine are 1202s. They are information, not aborts. The ventilator beeping at me is telling me the patient's tidal volume has dropped. The pulse oximeter beeping at me is telling me something about gas exchange is worse than it was. Neither alarm is, by itself, an instruction. The instruction comes from me, built from the pattern of alarms, the bedside picture, the case history, and the thirteen years of pattern recognition behind my eyes. I know which alarms to act on immediately and which ones to note and carry on through, because I have a short set of laminated cognitive aids on the wall behind my workstation, with the answers already written down for the crises I cannot afford to work out on the fly.

Those laminated cards are the direct descendants of Jack Garman's ballpoint-on-lined-paper sheet, slid under the plexiglass on a console in Houston, in July 1969.

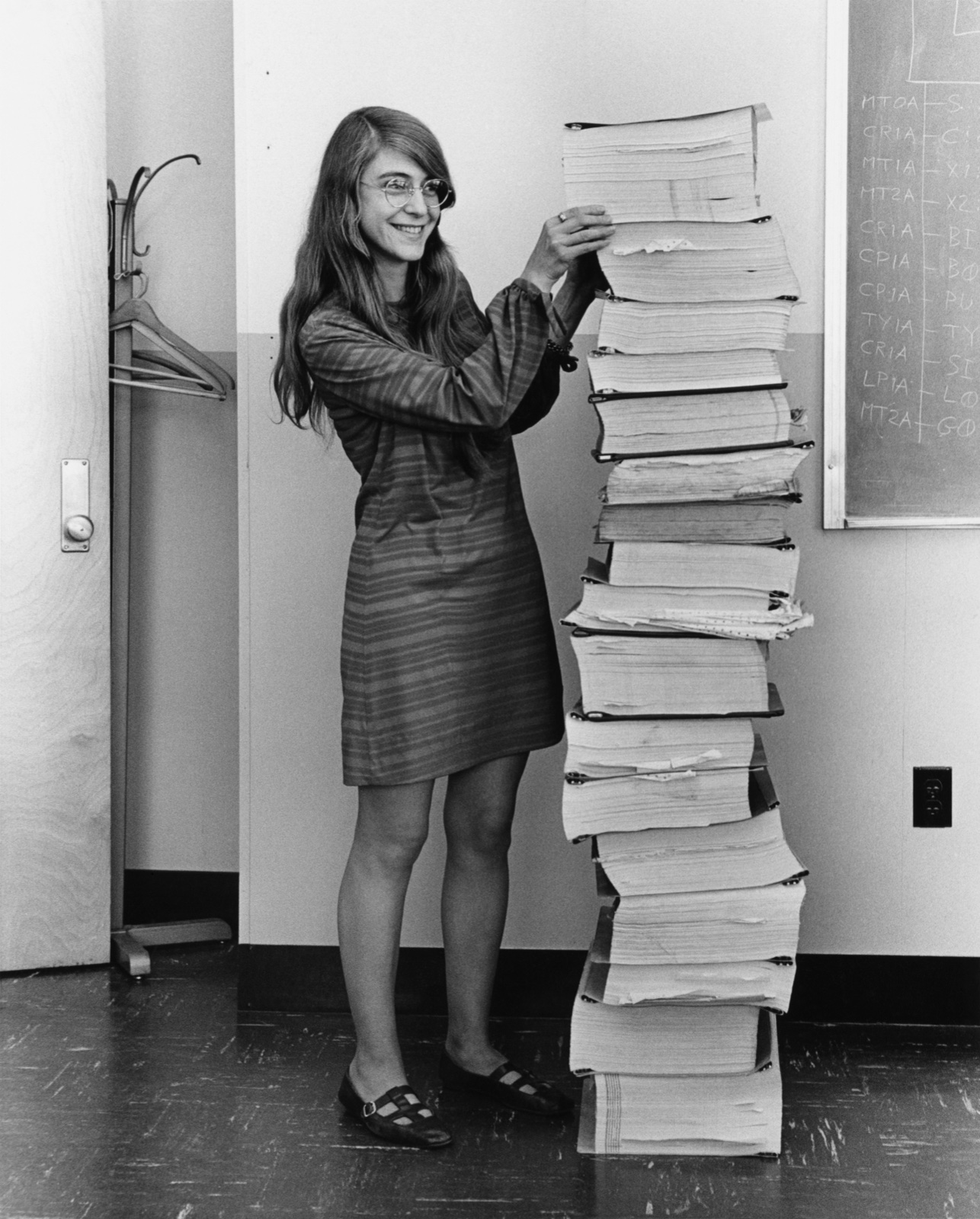

What Margaret Hamilton had to name

The software on the Apollo Guidance Computer was written under the direction of a woman named Margaret Hamilton, who was 32 when the Lunar Module landed. Around 1965 she started using a phrase the hardware engineers in her laboratory thought was a joke. She called what her division did software engineering. Her reason was rhetorical. She wanted a word that would force the rest of the laboratory to take seriously the discipline of specifying, reviewing, verifying, and releasing the code on which a human life would depend. The word "programmers," in the culture of the laboratory, meant clerks with codebooks. The word "engineers" meant people who built hardware. Hamilton wanted a word for the people doing the work that the hardware had been built to do.

The tease eventually stopped. The phrase went international in 1968, when a NATO conference in Bavaria put it on its letterhead. By the time Armstrong's boot touched the lunar surface, there existed, for the first time, a discipline capable of producing software to the standard a 1202 alarm required.

Medicine has no equivalent word for what happens at the head of the operating table when a patient deteriorates. We have "crisis resource management" and "non-technical skills," both terms imported from commercial aviation in the 1990s. We do not have a native word for our own cognitive work under load. As a profession we still tend to talk about it as a character trait. The good anaesthetist is "unflappable." The good anaesthetist "keeps her head." The good anaesthetist is "the one you want in the room when things go wrong."

That is not engineering language. It is the language you use when you do not have an engineering discipline and are praising the rare individual who has built one for themselves.

What medicine resisted

The cognitive aid revolution in anaesthesia is a fifteen-year-old story and it is not yet finished. There have always been some aids in the room. Most Australian theatres have kept a malignant hyperthermia box on the wall for thirty years, with dantrolene reconstitution instructions on the lid and a small stack of role-allocation task cards inside to hand out when the room fills up. A local anaesthetic toxicity poster has been on the wall since the late 2000s. The Vortex Approach to the unanticipated difficult airway, developed in Australia by Nicholas Chrimes and colleagues, gave us a CICO algorithm card that now sits on the back of most Australian anaesthetic machines. A more recent push, led by the Sydney anaesthetist Rob Hackett from around 2017, has put the wearer's name and role on the top of every scrub hat in many of our theatres, so that during a crisis the lead clinician can call David Anaesthetist or Clare Anaesthetic Nurse by name rather than gesturing at the back of someone's head.

What was missing, until much more recently, was the systematic catalogue. Atul Gawande's Checklist Manifesto came out in 2009. The Ariadne Labs Operating Room Crisis Checklists followed in 2013 and the Stanford Emergency Manual in 2014. Before those, the less common anaesthetic crises, the long tail that had no dedicated card of its own, were managed from the anaesthetist's memory alone.

The argument against the comprehensive crisis manuals was the same one cognitive aids had always faced. I am a specialist. I do not need a flowchart. If you think I need one, you do not understand what I do. It was the same argument Hamilton's colleagues had used against "software engineering," and it was wrong both times, and for the same reason. Under load, human working memory collapses. Seven plus or minus two drops to three plus or minus one. You are not processing at your leisure. You are priority-scheduling in real time, and the quality of the scheduling is the quality of the care.

A 24-year-old in Houston wrote the alarm-code cheat sheet in July 1969 because the flight director refused to fly the mission without one. The flight director was not insulting his guidance officer. He was engineering the system. He had decided, correctly, that it was unacceptable for a lunar landing to depend on a 26-year-old recalling from memory, under stress, the meaning of every alarm code his computer could throw at him.

Medicine is still, in 2026, arguing about whether the equivalent cheat sheet belongs on the theatre wall.

What I want you to take from this

1202 is not an abort. It is a status report. The machine is telling the humans in the loop: I am under load, I am shedding the tasks I can afford to shed, I am still flying the mission. If you see it on the display, you do not panic. You check whether it is recurring. You check whether the guidance loop is still locked. You go.

When I have watched anaesthetists fail in a crisis, it has almost never been because their hands did the wrong thing. It has been because they treated every alarm as an abort, or they did not treat the first alarm as anything at all. Neither response is the one that gets the spacecraft to the surface. The one that works is the one Hal Laning wrote down in 1965. Shed load. Announce yourself. Keep flying the most important thing.

The Apollo Guidance Computer is the best teacher operating theatres have ever had. Almost none of us know its name.